Key takeaways:

- Effective AI governance requires shared responsibility across the organization. Collaboration between data strategy, privacy, IT, and governance teams is essential to mitigate risks while enabling responsible innovation.
- Most organizations are still early in this journey. Many companies are still developing their AI governance frameworks due to gaps in ownership, expertise, or internal resources needed to monitor and audit AI systems.
- Tools like intake forms and surveys can help manage AI use cases. These tools allow organizations to track how AI is being used, identify potential privacy concerns, and create more structured approval processes.

In 2025, media coverage has highlighted the rapidly expanding capabilities of artificial intelligence. AI has the potential to transform enterprise operations — from routine tasks performed by entry-level employees to large-scale strategic initiatives.

However, alongside these opportunities come important risks, particularly around privacy and data protection. KPMG’s 2023 AI Risk Survey found that AI adoption is outpacing companies’ ability to fully assess and manage risk.

Only 19% of respondents said they have the internal expertise to conduct audits of their AI models, and 53% cited a need for more skilled resources as the leading factor limiting their ability to review AI-related risks.

“It’s the responsibility of everybody across the organization that processes data or deals with data to adopt the common principles,” Sarah Stalnecker, Global Director of Data Privacy at New Balance Athletics, said.

During a panel hosted by the Enterprise Data Strategy and Data Privacy Boards, Sarah continued, “I think that the primary challenge is how do you make it the responsibility not of a singular office, but the responsibility of the entire associate base?”

In this blog, we explore how panelists believe privacy, data strategy, and governance leaders can collaborate to promote responsible AI innovation across their organizations.

Who’s Responsible For Overseeing AI Governance?

One major theme highlighted in the KPMG survey was the lack of clear ownership when it comes to AI risk management.

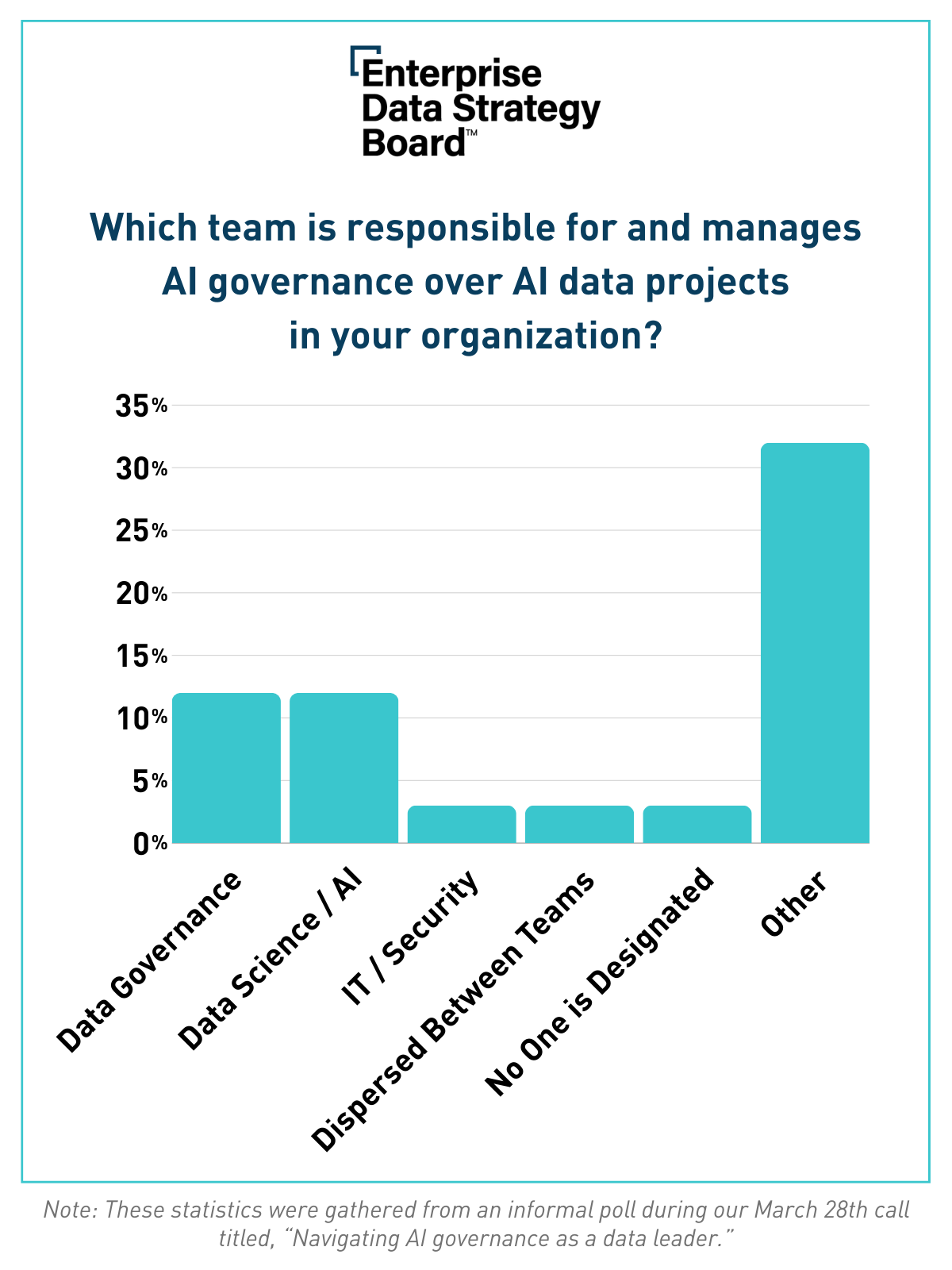

When Enterprise Data Strategy Board leaders were privately asked where AI governance sits within their organizations, responses varied widely.

Members did not agree on a single ownership model. However, about 20% reported that AI governance sits within Data Science or Governance.

Several members also noted that their organizations rely on cross-functional teams that include stakeholders from legal, privacy, security, and other risk-related functions to ensure multiple perspectives are considered, even if formal ownership remains unclear.

The Value of Cross-Departmental Collaboration in Managing AI Risk

During the panel discussion, John Tucker, Director of Enterprise Data Governance at McDonald’s, shared how his organization has approached AI governance by forming a collaborative working group.

This team includes representatives from governance, data strategy, global technology, data architecture, and key business stakeholders involved in customer experience.

John also emphasized the importance of maintaining strong collaboration with data science teams in order to clearly communicate both the capabilities and potential risks of AI models.

Organizations are currently exploring numerous AI use cases aimed at improving both customer and employee experiences. However, John explained that it is equally important to understand how employees are already using AI tools in their day-to-day workflows.

One obvious example is OpenAI’s ChatGPT, which gained over 100 million users shortly after launch, many of whom rely on the chatbot to boost work productivity. A survey by Business.com found that 57% of U.S. employees have used ChatGPT, and 16% regularly use it in their roles.

Still, these seemingly innocent uses of AI models could unintentionally expose companies to significant privacy risks, and some are already experiencing the pitfalls.

Cyberhaven, for example, recently reported that roughly 11% of the data employees put into ChatGPT is confidential.

Using Intake Forms to Track and Manage AI Use Cases

To better manage and mitigate these risks, John said, “We created an intake form, and it took quite a bit of information on tools that had AI embedded in them that people want to use and even models that people are leveraging with third-party vendors to provide any sort of insights.”

Information collected through these intake forms is shared with the cross-functional working group, allowing them to identify potential risks and ensure responsible deployment.

Additionally, John shared that McDonald’s launched a survey specific to generative AI use cases throughout the business, adding, “If you’re a business person filling this out, you start to second guess yourself whether or not you should be doing these things.”

Furthermore, McDonald’s is developing a use case repository and assessment registry to improve transparency around approved AI tools and their evaluations.

This approach helps demonstrate the business value of AI applications while making it easier to review and approve future use cases.
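For teams that want to prototype something similar, the intake form and use case repository described above could be modeled roughly as follows. This is a minimal sketch, not McDonald’s actual form or registry: every field name, review label, and class here (`IntakeRecord`, `UseCaseRegistry`, `risk_flags`) is a hypothetical assumption chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class ReviewStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class IntakeRecord:
    """One submitted intake form. All field names are hypothetical."""
    tool_name: str
    requesting_team: str
    purpose: str
    vendor: Optional[str] = None          # None for internally built models
    uses_personal_data: bool = False
    uses_confidential_data: bool = False
    status: ReviewStatus = ReviewStatus.PENDING

    def risk_flags(self) -> List[str]:
        """Return the reviews this submission should be routed to."""
        flags = []
        if self.uses_personal_data:
            flags.append("privacy review")
        if self.uses_confidential_data:
            flags.append("security review")
        if self.vendor is not None:
            flags.append("third-party vendor review")
        return flags


@dataclass
class UseCaseRegistry:
    """In-memory repository of submitted use cases (illustrative only)."""
    records: List[IntakeRecord] = field(default_factory=list)

    def submit(self, record: IntakeRecord) -> List[str]:
        """Store the record and return its risk flags for the working group."""
        self.records.append(record)
        return record.risk_flags()

    def approved_tools(self) -> List[str]:
        """List the distinct tool names that have been approved, sorted."""
        return sorted(
            {r.tool_name for r in self.records if r.status is ReviewStatus.APPROVED}
        )
```

In this sketch, a submission that involves personal data and an outside vendor would be flagged for both privacy and vendor review, and a tool would appear in `approved_tools()` only after a reviewer sets its status to `ReviewStatus.APPROVED`. A real process would likely add persistence, audit history, and the organization’s own risk criteria.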

Balancing the Benefits and Risks of AI Innovation

Panelists also discussed the growing challenge of balancing AI innovation with the need for responsible governance.

Rebecca Whitaker, Assistant Director of Privacy and Data Protection Officer at Principal Financial Group, noted a gap between the public’s perception and the reality of AI risks.

“I think that’s the one thing that delineates AI from anything else we’ve ever seen,” Rebecca said. “There is such a public perception that AI is something that’s a net positive for them, not realizing, obviously, the massive amount of work that goes into mitigating the risk to them.”

Rebecca described consent as something of an illusion, where consumers often agree to share data without fully understanding how it will ultimately be used.

“I think that the overall vision that customers have of AI is very different from the vision we have of the control functions for AI,” Rebecca said. “Because of that tension, I think we have to be intentional about what we’re doing with it and how we allow our teams to innovate with it.”

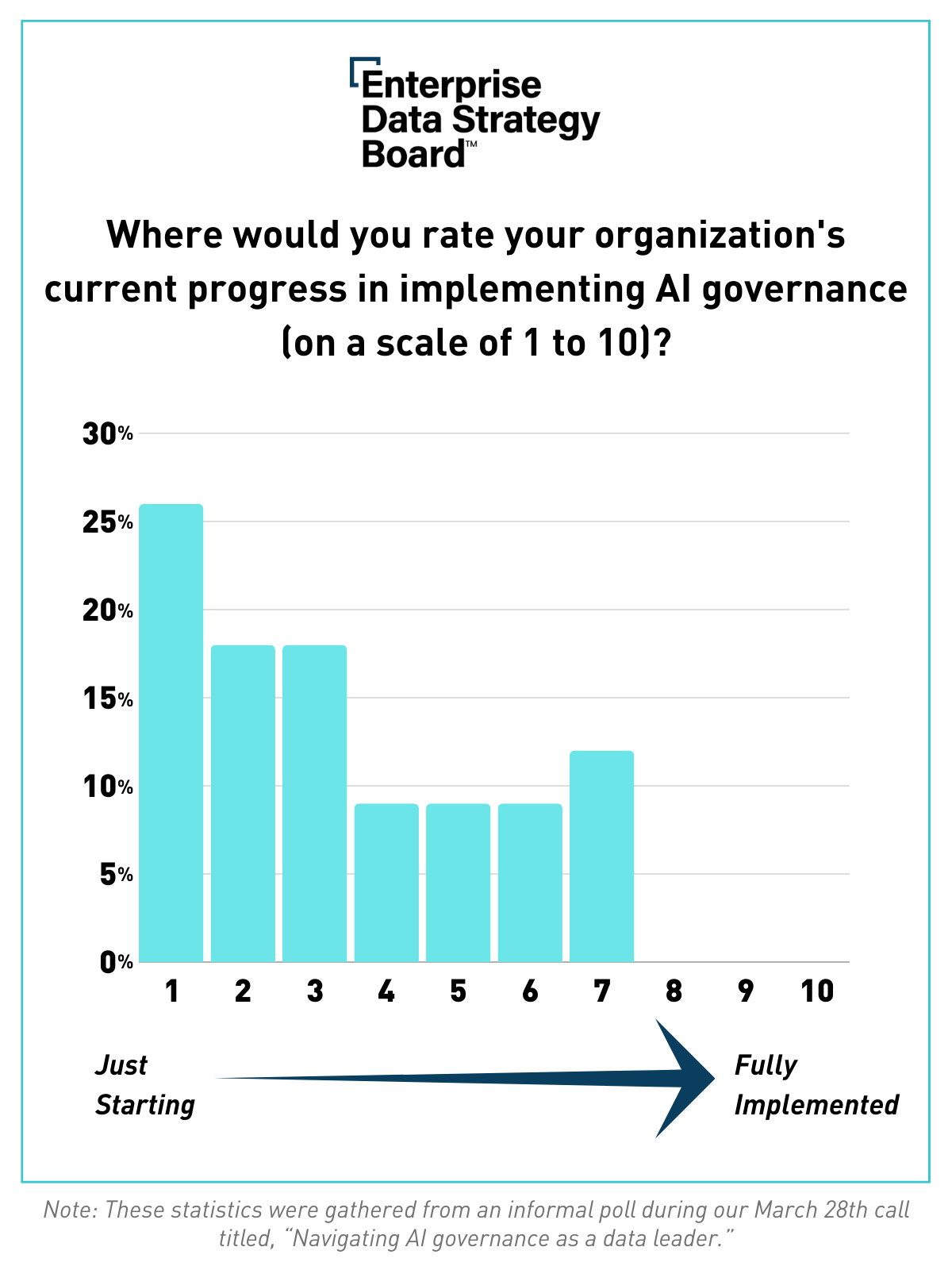

Many companies are still at an early stage of building AI governance frameworks.

During a confidential call, Enterprise Data Strategy Board members rated their organizations’ AI governance maturity on a scale from one to ten.

Most members on the line, who work at some of the world’s biggest companies, graded their progress at one or “just starting.” No one gave their organization above a seven.

While the scale of risk has increased due to the volume of data used to train AI systems and the level of public attention, the fundamental principles of data governance and privacy protection remain largely the same.

Rebecca also shared that Principal Financial Group already had many policies and guidelines in place that could be applied to AI-related risks.

Still, she acknowledged that organizations will need to continue adapting as technology evolves and new regulations emerge.

“I think, as usual, most companies and most legislatures are going to be a little bit behind because the technology is changing so quickly,” Rebecca said. “We have to pivot very fast, and more so than anything else. This feels different than the immediacy that was posed to us when GDPR was crested above the horizon.”

Benchmarking with Data Strategy and Privacy Leaders

Artificial intelligence has accelerated the need for greater transparency around how organizations collect, process, and protect personal data.

Panelists shared many additional insights on how analytics and privacy leaders can work together to support innovation while safeguarding consumer information. You can catch all the insights by downloading the panel recording here.